Report by 新智元

Source: TensorFlow

Author: Jonathan Shen  Editor: Xiao Qin (肖琴)

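[新智元 Digest] Google recently open-sourced Lingvo, a powerful deep learning framework for NLP that focuses on sequence models for language-related tasks such as machine translation, speech recognition, and speech synthesis. Over the past two years, Google has published dozens of papers that used Lingvo to achieve SOTA results.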

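Google recently open-sourced Lingvo, one of its internal secret weapons for NLP.

It is a powerful NLP framework that has already achieved SOTA performance on many tasks across dozens of Google papers.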

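Lingvo means "language" in Esperanto. The name alludes to the framework's roots: it is a general-purpose deep learning framework built with TensorFlow, with a focus on sequence models for language-related tasks such as machine translation, speech recognition, and speech synthesis.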

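Lingvo has gained traction inside Google, and the number of researchers using it has surged. Over the past two years, Google has published dozens of papers that used Lingvo to achieve SOTA results, with more to come.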

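These include the landmark 2016 machine translation paper on the Google Neural Machine Translation system (Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation), which also used Lingvo. That work opened a new chapter in machine translation, marking the formal transition from IBM's statistical machine translation models (PBMT, phrase-based machine translation) to neural machine translation models. The system reduced translation errors by more than 55%-85%, bringing machine translation much closer to the level of an average human translator.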

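Beyond machine translation, Lingvo has also been used for speech recognition, language understanding, speech synthesis, and speech-to-text translation.

Google lists 26 NLP papers that use the Lingvo framework. They were published at top venues such as ACL, EMNLP, and ICASSP, and report multiple SOTA results. The full list appears at the end of this article.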

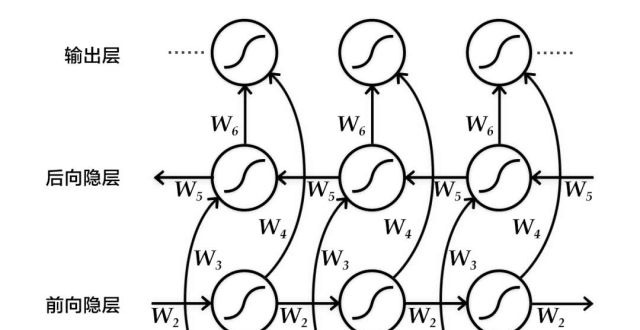

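The architectures Lingvo supports include traditional RNN sequence models, Transformer models, and models with VAE components, among others.

Google said: "To show our support of the research community and encourage reproducible research effort, we have open-sourced the framework and are starting to release the models used in our papers."

In addition, Google released a paper that outlines Lingvo's design, introduces the various parts of the framework, and provides examples of advanced features that showcase its capabilities.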

Paper:

https://arxiv.org/pdf/1902.08295.pdf

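Abstract

Lingvo is a TensorFlow framework that offers a complete solution for collaborative deep learning research, with a particular focus on sequence-to-sequence models. Lingvo models are composed of modular building blocks that are flexible and easily extensible, and experiment configurations are centralized and highly customizable. The framework directly supports distributed training and quantized inference, and it contains existing implementations of a large number of utilities, helper functions, and recent research ideas. The paper outlines the underlying design of Lingvo and introduces the various parts of the framework, while also offering examples of advanced features that showcase its capabilities.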

Designed for collaborative research, flexible, and fast

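Lingvo was built with collaborative research in mind, and it promotes code reuse by sharing the implementation of common layers across different tasks. In addition, all layers implement the same common interface and are laid out in the same way. This not only produces cleaner, easier-to-understand code, it also makes it very simple to apply improvements that someone else made for another task to your own. Enforcing this consistency costs some extra discipline and boilerplate, but Lingvo tries to keep that to a minimum so that iteration stays fast during research.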
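
To make the "same common interface" idea concrete, here is a minimal plain-Python sketch. It does not use Lingvo itself: `Layer`, `LayerParams`, `ScaleLayer`, `ClipLayer`, and `run_stack` are hypothetical names invented for illustration, and the real framework's classes and method names may differ. The point is only that when every layer is configured and called the same way, a layer written for one task can be dropped into another.

```python
from abc import ABC, abstractmethod
from dataclasses import dataclass
from typing import List


@dataclass
class LayerParams:
    """Hypothetical base configuration that every layer shares."""
    name: str


class Layer(ABC):
    """Hypothetical common interface: built from params, run via forward()."""

    def __init__(self, params: LayerParams):
        self.params = params

    @abstractmethod
    def forward(self, inputs: List[float]) -> List[float]:
        """Transform a list of values and return a new list."""


@dataclass
class ScaleParams(LayerParams):
    factor: float = 1.0


class ScaleLayer(Layer):
    def forward(self, inputs: List[float]) -> List[float]:
        # Multiply every value by the configured factor.
        return [x * self.params.factor for x in inputs]


@dataclass
class ClipParams(LayerParams):
    limit: float = 1.0


class ClipLayer(Layer):
    def forward(self, inputs: List[float]) -> List[float]:
        # Clamp every value into [-limit, limit].
        lim = self.params.limit
        return [max(-lim, min(lim, x)) for x in inputs]


def run_stack(layers: List[Layer], inputs: List[float]) -> List[float]:
    """Apply layers in order; works for any Layer because the interface is shared."""
    for layer in layers:
        inputs = layer.forward(inputs)
    return inputs


if __name__ == "__main__":
    stack = [ScaleLayer(ScaleParams("scale", 2.0)), ClipLayer(ClipParams("clip", 3.0))]
    print(run_stack(stack, [1.0, 2.0, -4.0]))
```

Because `run_stack` only depends on the shared `forward` method, swapping in a layer that another team wrote requires no changes to the calling code, which is the kind of reuse the paragraph above describes.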

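Another aspect of collaboration is sharing reproducible results. Lingvo provides a centralized location for checking in model hyperparameter configurations. This not only documents important experiments, it also gives others an easy way to reproduce your results by training the same model.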
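
One way to picture a centralized home for hyperparameter configurations is a small registry that maps experiment names to functions returning their settings. The sketch below is plain Python under stated assumptions: `register`, `get_params`, the experiment names, and all hyperparameter values are hypothetical and are not taken from Lingvo or from any of the papers.

```python
from typing import Callable, Dict

# Hypothetical central registry: experiment name -> function that builds its config.
_REGISTRY: Dict[str, Callable[[], dict]] = {}


def register(name: str):
    """Decorator that checks a named configuration into the registry."""
    def decorator(fn: Callable[[], dict]) -> Callable[[], dict]:
        if name in _REGISTRY:
            raise ValueError(f"duplicate experiment name: {name}")
        _REGISTRY[name] = fn
        return fn
    return decorator


@register("toy_mt.base")
def toy_mt_base() -> dict:
    # Illustrative values only.
    return {"num_layers": 4, "hidden_dim": 512, "learning_rate": 1e-3}


@register("toy_mt.deep")
def toy_mt_deep() -> dict:
    # A variant records only what differs from the base experiment.
    params = toy_mt_base()
    params["num_layers"] = 8
    return params


def get_params(name: str) -> dict:
    """Look up an experiment by name and build a fresh copy of its config."""
    return _REGISTRY[name]()


if __name__ == "__main__":
    print(get_params("toy_mt.deep"))
```

With the configuration checked in under a stable name, reproducing an experiment reduces to looking up that name and training with the returned settings.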

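Although Lingvo started out with a focus on NLP, it is inherently very flexible, and researchers have successfully used the framework to implement models for tasks such as image segmentation and point cloud classification. It also supports distillation, GANs, and multi-task models.

At the same time, the framework does not sacrifice speed: it has an optimized input pipeline and fast distributed training.

Finally, Lingvo was built with easy productionization in mind, and there is even a well-defined path for porting models for mobile inference.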

Published papers that use Lingvo

Translation:

The Best of Both Worlds: Combining Recent Advances in Neural Machine Translation. Mia X. Chen, Orhan Firat, Ankur Bapna, Melvin Johnson, Wolfgang Macherey, George Foster, Llion Jones, Mike Schuster, Noam Shazeer, Niki Parmar, Ashish Vaswani, Jakob Uszkoreit, Lukasz Kaiser, Zhifeng Chen, Yonghui Wu, and Macduff Hughes. ACL 2018.

Revisiting Character-Based Neural Machine Translation with Capacity and Compression. Colin Cherry, George Foster, Ankur Bapna, Orhan Firat, and Wolfgang Macherey. EMNLP 2018.

Training Deeper Neural Machine Translation Models with Transparent Attention. Ankur Bapna, Mia X. Chen, Orhan Firat, Yuan Cao and Yonghui Wu. EMNLP 2018.

Google's Neural Machine Translation System: Bridging the Gap between Human and Machine Translation. Yonghui Wu, Mike Schuster, Zhifeng Chen, Quoc V. Le, Mohammad Norouzi, Wolfgang Macherey, Maxim Krikun, Yuan Cao, Qin Gao, Klaus Macherey, Jeff Klingner, Apurva Shah, Melvin Johnson, Xiaobing Liu, Łukasz Kaiser, Stephan Gouws, Yoshikiyo Kato, Taku Kudo, Hideto Kazawa, Keith Stevens, George Kurian, Nishant Patil, Wei Wang, Cliff Young, Jason Smith, Jason Riesa, Alex Rudnick, Oriol Vinyals, Greg Corrado, Macduff Hughes, and Jeffrey Dean. Technical Report, 2016.

Speech recognition:

A comparison of techniques for language model integration in encoder-decoder speech recognition. Shubham Toshniwal, Anjuli Kannan, Chung-Cheng Chiu, Yonghui Wu, Tara N. Sainath, Karen Livescu. IEEE SLT 2018.

Deep Context: End-to-End Contextual Speech Recognition. Golan Pundak, Tara N. Sainath, Rohit Prabhavalkar, Anjuli Kannan, Ding Zhao. IEEE SLT 2018.

Speech recognition for medical conversations. Chung-Cheng Chiu, Anshuman Tripathi, Katherine Chou, Chris Co, Navdeep Jaitly, Diana Jaunzeikare, Anjuli Kannan, Patrick Nguyen, Hasim Sak, Ananth Sankar, Justin Tansuwan, Nathan Wan, Yonghui Wu, and Xuedong Zhang. Interspeech 2018.

Compression of End-to-End Models. Ruoming Pang, Tara Sainath, Rohit Prabhavalkar, Suyog Gupta, Yonghui Wu, Shuyuan Zhang, and Chung-Cheng Chiu. Interspeech 2018.

Contextual Speech Recognition in End-to-End Neural Network Systems using Beam Search. Ian Williams, Anjuli Kannan, Petar Aleksic, David Rybach, and Tara N. Sainath. Interspeech 2018.

State-of-the-art Speech Recognition With Sequence-to-Sequence Models. Chung-Cheng Chiu, Tara N. Sainath, Yonghui Wu, Rohit Prabhavalkar, Patrick Nguyen, Zhifeng Chen, Anjuli Kannan, Ron J. Weiss, Kanishka Rao, Ekaterina Gonina, Navdeep Jaitly, Bo Li, Jan Chorowski, and Michiel Bacchiani. ICASSP 2018.

End-to-End Multilingual Speech Recognition using Encoder-Decoder Models. Shubham Toshniwal, Tara N. Sainath, Ron J. Weiss, Bo Li, Pedro Moreno, Eugene Weinstein, and Kanishka Rao. ICASSP 2018.

Multi-Dialect Speech Recognition With a Single Sequence-to-Sequence Model. Bo Li, Tara N. Sainath, Khe Chai Sim, Michiel Bacchiani, Eugene Weinstein, Patrick Nguyen, Zhifeng Chen, Yonghui Wu, and Kanishka Rao. ICASSP 2018.

Improving the Performance of Online Neural Transducer Models. Tara N. Sainath, Chung-Cheng Chiu, Rohit Prabhavalkar, Anjuli Kannan, Yonghui Wu, Patrick Nguyen, and Zhifeng Chen. ICASSP 2018.

Minimum Word Error Rate Training for Attention-based Sequence-to-Sequence Models. Rohit Prabhavalkar, Tara N. Sainath, Yonghui Wu, Patrick Nguyen, Zhifeng Chen, Chung-Cheng Chiu, and Anjuli Kannan. ICASSP 2018.

No Need for a Lexicon? Evaluating the Value of the Pronunciation Lexica in End-to-End Models. Tara N. Sainath, Rohit Prabhavalkar, Shankar Kumar, Seungji Lee, Anjuli Kannan, David Rybach, Vlad Schogol, Patrick Nguyen, Bo Li, Yonghui Wu, Zhifeng Chen, and Chung-Cheng Chiu. ICASSP 2018.

Learning hard alignments with variational inference. Dieterich Lawson, Chung-Cheng Chiu, George Tucker, Colin Raffel, Kevin Swersky, and Navdeep Jaitly. ICASSP 2018.

Monotonic Chunkwise Attention. Chung-Cheng Chiu and Colin Raffel. ICLR 2018.

An Analysis of Incorporating an External Language Model into a Sequence-to-Sequence Model. Anjuli Kannan, Yonghui Wu, Patrick Nguyen, Tara N. Sainath, Zhifeng Chen, and Rohit Prabhavalkar. ICASSP 2018.

Language understanding:

Semi-Supervised Learning for Information Extraction from Dialogue. Anjuli Kannan, Kai Chen, Diana Jaunzeikare, and Alvin Rajkomar. Interspeech 2018.

CaLcs: Continuously Approximating Longest Common Subsequence for Sequence Level Optimization. Semih Yavuz, Chung-Cheng Chiu, Patrick Nguyen, and Yonghui Wu. EMNLP 2018.

Speech synthesis:

Hierarchical Generative Modeling for Controllable Speech Synthesis. Wei-Ning Hsu, Yu Zhang, Ron J. Weiss, Heiga Zen, Yonghui Wu, Yuxuan Wang, Yuan Cao, Ye Jia, Zhifeng Chen, Jonathan Shen, Patrick Nguyen, Ruoming Pang. Submitted to ICLR 2019.

Transfer Learning from Speaker Verification to Multispeaker Text-To-Speech Synthesis. Ye Jia, Yu Zhang, Ron J. Weiss, Quan Wang, Jonathan Shen, Fei Ren, Zhifeng Chen, Patrick Nguyen, Ruoming Pang, Ignacio Lopez Moreno, Yonghui Wu. NIPS 2018.

Natural TTS Synthesis By Conditioning WaveNet On Mel Spectrogram Predictions. Jonathan Shen, Ruoming Pang, Ron J. Weiss, Mike Schuster, Navdeep Jaitly, Zongheng Yang, Zhifeng Chen, Yu Zhang, Yuxuan Wang, RJ Skerry-Ryan, Rif A. Saurous, Yannis Agiomyrgiannakis, Yonghui Wu. ICASSP 2018.

On Using Backpropagation for Speech Texture Generation and Voice Conversion. Jan Chorowski, Ron J. Weiss, Rif A. Saurous, Samy Bengio. ICASSP 2018.

Speech-to-text translation:

Leveraging weakly supervised data to improve end-to-end speech-to-text translation. Ye Jia, Melvin Johnson, Wolfgang Macherey, Ron J. Weiss, Yuan Cao, Chung-Cheng Chiu, Naveen Ari, Stella Laurenzo, Yonghui Wu. Submitted to ICASSP 2019.

Sequence-to-Sequence Models Can Directly Translate Foreign Speech. Ron J. Weiss, Jan Chorowski, Navdeep Jaitly, Yonghui Wu, and Zhifeng Chen. Interspeech 2017.

https://github.com/tensorflow/lingvo/blob/master/PUBLICATIONS.md

Open-source repository:

https://github.com/tensorflow/lingvo
